Hi, I'm Kenneth. Welcome to this week's edition of Tech Venture Navigator.

Last week we covered the SaaSpocalypse, Claude Opus 4.6, and the $55B January. This week, the picture sharpened into something more consequential.

OpenAI quietly walked back $1.4 trillion in infrastructure promises to $600 billion, told investors it burned $8 billion in 2025, and is now laying the groundwork for a $1 trillion IPO. Google's startup chief publicly declared two entire categories of AI startups dead on arrival. Fei-Fei Li pulled in $1 billion for spatial AI. Grok 4.20 launched with a multi-agent architecture that beat every other model in a live trading competition. And the smartest VC frameworks on defensibility dropped this week from Andre Zumbuhl and Foundation Capital, both asking the same question I've been asking every founder who pitches LateFund: when the integration layer is free and the models are commoditized, what exactly are you selling?

Capital is concentrating at the extremes. The middle is being hollowed out. And the companies that survive the next 18 months will be the ones that understood this week's signals.

Let's break it down.

Brought to you by Lemlist - The Leading Outreach Tool

📊 The Month That Shook Software (The Benchmarks)

I. OpenAI Cuts Compute Target to $600B, Eyes $1T IPO

This was the most important story of the week for anyone allocating capital to AI.

On Friday, CNBC and Reuters reported that OpenAI is now targeting roughly $600 billion in total compute spend by 2030. That's less than half the $1.4 trillion Sam Altman was publicly touting months ago when he talked about building 30 gigawatts of computing resources.

The numbers that matter:

$13.1B revenue in 2025 (beat the $10B target)

$8B burned in 2025 (under the $9B target, but still enormous)

Gross margin dropped from 40% to 33% as inference costs quadrupled

$280B projected 2030 revenue, split evenly between consumer and enterprise

900M weekly active users on ChatGPT (up from 800M in October)

1.5M weekly active users on Codex (competing directly with Claude Code)

$100B+ funding round in progress, ~90% from strategic investors

Nvidia in talks for $30B at a $730B pre-money valuation

IPO being prepared that could value OpenAI at up to $1 trillion

The $600B number is OpenAI connecting spending to revenue in a way public market investors can actually underwrite. The $1.4 trillion was a vision statement. The $600 billion is a business plan.

The Information also reported this week that OpenAI's inference expenses quadrupled in 2025, which is the number nobody is talking about loudly enough. Revenue can double and your business can still get worse if your cost-to-serve grows faster than your revenue. That's exactly what happened: gross margin fell from 40% to 33%.

Source: CNBC
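To make the cost-to-serve point concrete, here is a back-of-the-envelope sketch. The dollar figures are hypothetical round numbers picked for illustration, not OpenAI's actual financials, and `gross_margin` is just a helper name I made up.

```python
def gross_margin(revenue: float, cost_to_serve: float) -> float:
    """Return gross margin as a fraction of revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cost_to_serve) / revenue


# Hypothetical year 1: $100 of revenue, $60 cost to serve.
year1 = gross_margin(100.0, 60.0)

# Hypothetical year 2: revenue doubles, cost to serve triples.
year2 = gross_margin(200.0, 180.0)

print(f"Year 1 gross margin: {year1:.0%}")
print(f"Year 2 gross margin: {year2:.0%}")
```

In this toy case revenue doubles, yet gross profit dollars fall from $40 to $20. Growth alone doesn't fix a cost curve that is steeper than the revenue curve.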

✈️ NAVIGATOR’S EDGE:

For founders: OpenAI's margin compression (40% to 33%) as it scales is a warning for every AI company building on API calls. Revenue growth means nothing if inference costs eat your gross margin. If you're pitching investors and your unit economics assume today's API pricing, you're building on sand. Model your costs at 3-4x current levels before you walk into the room.

For investors: The fact that OpenAI is walking back its capex commitments before an IPO tells you the public markets won't tolerate "spend now, figure it out later." This is the maturation signal for the entire AI sector. The question in every AI deal you evaluate should now be: what happens to this company's margins when it has to serve 10x the users?

For LPs: A $1T IPO for OpenAI would be the single largest liquidity event in venture history. If you have exposure through SoftBank, Microsoft, Thrive, or any earlier round participant, the exit clock just started ticking. The SpaceX IPO ($1.5T target for June/July) creates a secondary liquidity event in the same window. 2026 is shaping up as the most consequential year for tech IPOs since 2012.

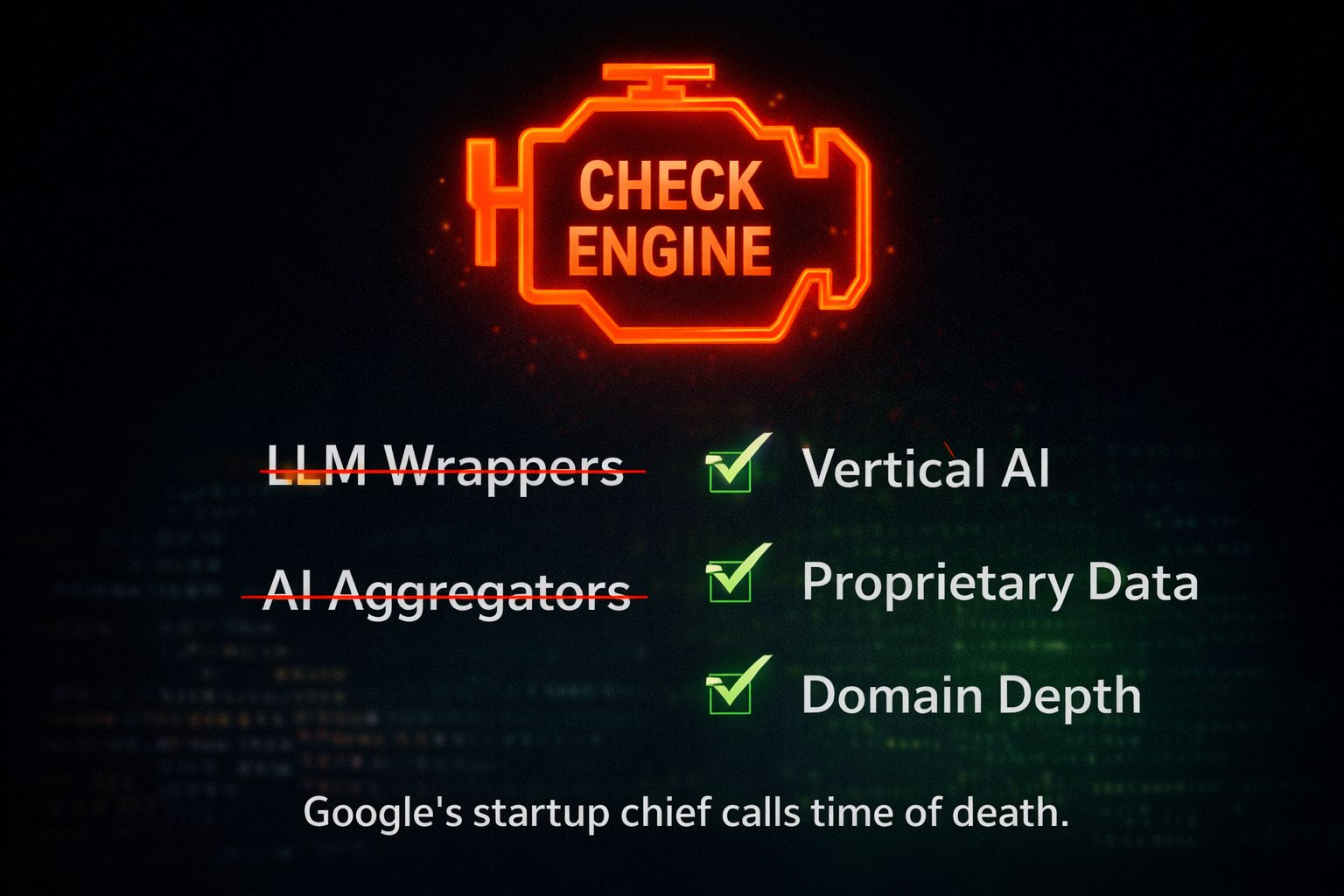

II. Google VP Declares Two AI Startup Categories Dead

This landed Friday via TechCrunch's Equity podcast and went viral over the weekend. Darren Mowry, VP of Google's global startup organization (spanning Cloud, DeepMind, and Alphabet), said two of the most popular AI business models have their "check engine light" on.

Category 1: LLM Wrappers. Startups that layer a product or UX on top of existing models (Claude, GPT, Gemini) to solve a specific problem. The AI study helper. The generic writing assistant. The customer service chatbot that's 100% dependent on the underlying model for its value.

Category 2: AI Aggregators. The subset that routes queries across multiple models through a single API with monitoring and eval tooling. Think multi-model hubs or orchestration-layer businesses.

Mowry compared the current moment to early cloud computing. In the late 2000s, startups resold AWS infrastructure with better tooling. Then Amazon built those features directly. Most were wiped out. Only the ones that added genuine services survived.

What survives the shakeout:

Deep vertical specialization (Cursor for coding, Harvey for legal)

Proprietary data moats that compound with usage

Products where the model is a component, not the product itself

Vibe coding platforms (Replit, Lovable, Cursor all had record years in 2025)

He also flagged biotech and climate tech as areas where startups can build real differentiation because of the data complexity involved.

Source: TechCrunch

✈️ NAVIGATOR’S EDGE: This is the warning I've been giving founders in our deal flow for six months. When a Google VP says it publicly on a podcast, you're already late.

The test: Could your product be replicated in an afternoon using the next model's API release? If yes, you don't have a company. You have a feature. The startups in our pipeline at LateFund that survive are the ones where the model is 20% of the value and the remaining 80% is domain expertise, proprietary data, workflow integration, and distribution.

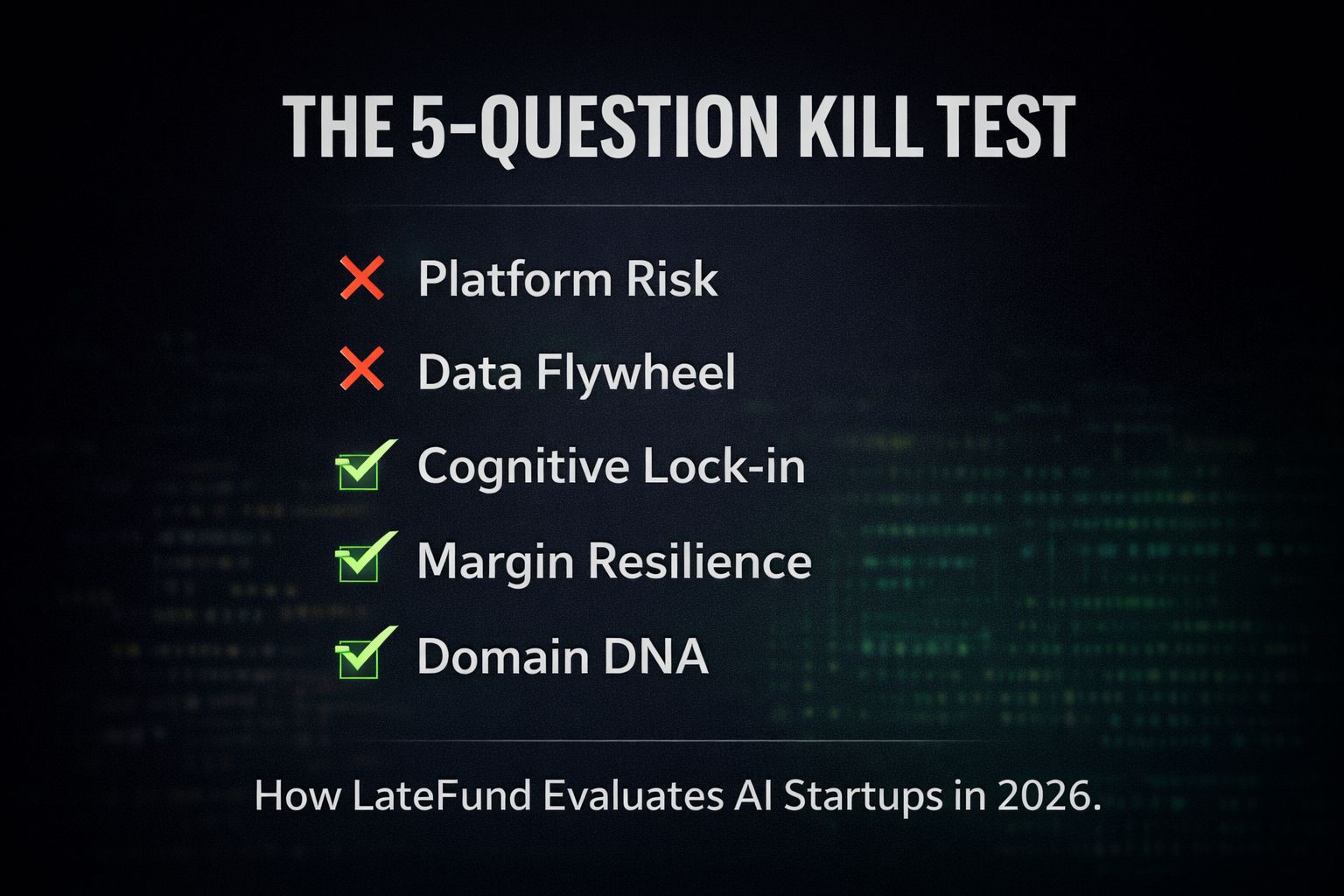

III. The Kill Test: 5 Questions I Now Ask Every AI Startup That Pitches LateFund

Recommended reading: Data Driven VC published an excellent defensibility framework this week that every investor should read. Foundation Capital also released their 2026 predictions covering similar territory. Both are worth your time.

Here's my take on the same question, shaped by what I'm seeing on the ground as a fund manager running deal flow across pre-seed AI and B2B SaaS.

I've reviewed over 300 AI startup pitches in the past 12 months. At least half were effectively wrappers with nice pitch decks. The Google obituary this week validated what I've been feeling in every screening call: the bar has fundamentally shifted. So I built a kill test. Five questions. If a founder can't handle all five, we don't proceed.

Question 1: "If Anthropic, OpenAI, or Google ships your core feature as a native capability next Tuesday, what happens to your business?"

This is the platform risk question, and most founders fumble it. They say things like "we have first-mover advantage" or "our UX is better." Neither is a moat. UX is a 6-week copy job. First-mover advantage in AI lasts about as long as the gap between model releases.

The right answer sounds like: "Our value isn't the AI capability. It's the 14 months of compliance workflows we've mapped for pharmaceutical clinical trials, the regulatory templates we've validated with 30 enterprise customers, and the audit trail infrastructure that took 8 months to build. A better model makes all of that more powerful, not less relevant."

That's Harvey's answer. That's Cursor's answer. That's the answer from every company Google's Mowry pointed to as a survivor.

Question 2: "Show me the data asset that gets more valuable with every customer interaction."

I stole this concept from my own experience building iPPi. We had a PropTech analytics platform where every property valuation our users ran made the next valuation more accurate. Not because we were training on their data (privacy matters), but because the system learned which data sources, which weighting models, and which local market adjustments produced outputs that users actually trusted.

The AI startups I fund need the same dynamic. Not just "we use AI." Instead: "Every time a compliance officer uses our tool and edits the output, we learn what 'correct' looks like for that specific regulatory environment. After 6 months with a client, our error rate drops 40%. After 12 months, 70%. A competitor starting fresh with the same model can't replicate that learning without those same 12 months."

That's the compounding data flywheel. It's the single most underpriced moat in AI right now.

Question 3: "What happens to your customer's business if they try to rip you out after 18 months?"

Switching cost used to mean "your data is locked in our proprietary format." That's dead. MCP, open APIs, and standardized data formats killed it.

The new switching cost is institutional memory. If a legal team has spent 18 months teaching your system how they actually review contracts (not textbook contract review, but the specific way their partners think about risk allocation, the clauses they always negotiate, the formatting their clients expect), ripping you out means losing all of that accumulated intelligence. A new tool connects to the same data in a day. Relearning those preferences takes another 18 months.

I want to hear founders articulate this. If switching costs are purely technical (data format, integration complexity), I pass. If switching costs are cognitive (learned institutional behavior that took months to build), I lean in.

Question 4: "Can you show me unit economics that survive a 5x increase in model costs?"

This is the OpenAI lesson from this week, applied directly. If your margins depend on today's API pricing, you don't have margins. You have a temporary subsidy from model providers who are burning billions and haven't figured out their own pricing yet.

I want to see startups that charge on outcomes, not on compute. A legal AI that charges per contract reviewed, not per API call. A compliance tool that charges per audit completed. A sales intelligence platform that charges per qualified lead. When you're selling outcomes, model cost increases are your problem to optimize, not an existential threat.

The founders who can demonstrate this pricing discipline at pre-seed stand out immediately, because 90% of their competitors haven't thought about it.
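Here is roughly how I'd sketch that stress test on a napkin. Every number below is hypothetical, and the function names (`stressed_margin`, `breakeven_multiplier`) are my own illustration, not a standard model. The idea is to hold the price per outcome fixed, multiply the model cost, and see what margin is left.

```python
def stressed_margin(price: float, model_cost: float, other_cost: float,
                    multiplier: float) -> float:
    """Gross margin per outcome when model cost is scaled by `multiplier`."""
    return (price - model_cost * multiplier - other_cost) / price


def breakeven_multiplier(price: float, model_cost: float, other_cost: float) -> float:
    """How many times model cost can rise before margin per outcome hits zero."""
    return (price - other_cost) / model_cost


# Hypothetical per-outcome economics (illustrative only).
outcome_priced = {"price": 50.0, "model_cost": 2.0, "other_cost": 8.0}
thin_wrapper = {"price": 5.0, "model_cost": 2.0, "other_cost": 1.0}

for name, econ in [("outcome-priced", outcome_priced), ("thin wrapper", thin_wrapper)]:
    for m in (1, 3, 5):
        margin = stressed_margin(multiplier=m, **econ)
        print(f"{name} at {m}x model cost: {margin:.0%}")
    print(f"{name} breaks even at {breakeven_multiplier(**econ):.0f}x")
```

With these made-up inputs, the outcome-priced product still holds a 64% margin at 5x model cost, while the thin wrapper is underwater by 3x. The gap comes from how small the model cost is relative to the price of the outcome, which is the whole point of pricing on outcomes.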

Question 5: "Who on your team has done the job your AI is replacing?"

This is the one that filters fastest. The best vertical AI companies are built by people who spent years doing the work manually. They know the edge cases. They know which 20% of the workflow accounts for 80% of the value. They know what "good" looks like in a way that no amount of prompt engineering from a generalist team can replicate.

Harvey was built by lawyers. Cursor was built by engineers who spent years in the IDE. The clinical AI companies getting real traction are founded by physicians, not computer scientists who read a few papers.

When a team of three Stanford CS grads tells me they're going to revolutionize construction project management with AI, my first question is: "Has anyone on this team ever managed a construction project?" If the answer is no, the product will be technically impressive and commercially worthless, because they'll solve the wrong problems elegantly.

✈️ NAVIGATOR’S EDGE: I published this kill test because I think the market needs it. Too much capital is flowing into wrappers with good stories. Google's Mowry just confirmed the obvious. Gartner says only ~130 of the hundreds of "agentic AI" vendors are legitimate, and 40%+ of agentic projects will be canceled by 2027 because the economics don't work.

If you're a founder and you can survive all five questions, reach out. We're actively deploying. If you're an investor and you want to compare notes on deal evaluation frameworks, my DMs are open.

IV. Fei-Fei Li's World Labs Raises $1B for Spatial AI

The "godmother of AI" raised one of the largest rounds of the year for a thesis that moves beyond language entirely.

World Labs pulled in $1 billion from AMD, Nvidia, Autodesk ($200M lead), Andreessen Horowitz, Emerson Collective, Fidelity, and Sea. Valuation reportedly around $5 billion.

Li's thesis, in her own words: "If AI is to be truly useful, it must understand worlds, not just words. Worlds are governed by geometry, physics, and dynamics."

Their product Marble lets users generate persistent, editable 3D environments from images, video, or text prompts. Not just images of 3D worlds, but actual navigable, modifiable environments with consistent physics.

Autodesk's $200M investment and advisory role is the commercial signal. Autodesk's chief scientist told TechCrunch the partnership could see customers starting with a world-model-based sketch in Marble (say, an office layout) and then drilling down into detailed design elements using Autodesk's tools. If this integration ships, every architect, engineer, and game designer on the planet becomes a potential user.

The "world models" thesis is converging from multiple directions simultaneously: Fei-Fei Li (World Labs), Yann LeCun (AMI Labs, left Meta, reportedly targeting $5B valuation), Google DeepMind (Genie), and Runway (GWM-1, raised $315M this same week at $5.3B from General Atlantic, Nvidia, AMD, Adobe, and Fidelity).

Source: Bloomberg

✈️ NAVIGATOR'S EDGE: For founders building in 3D, spatial, or physical AI: this round repriced your sector upward permanently. World Labs at $5B on a single product validates the category. But the Autodesk partnership is the real lesson. Spatial AI companies need enterprise distribution partners, not just better models. If you're building world models without a clear path to integration with existing creative or industrial workflows, you're building a research lab, not a company.

For investors: the spatial AI stack is forming fast. Compute layer (Nvidia, AMD), model layer (World Labs, Runway, DeepMind), application layer (Autodesk, Unity, game studios). The investable gap right now is the tooling that sits between model and application, the picks and shovels of the 3D AI era. That's where pre-seed capital should be looking.

Seven major model releases in seventeen days. The capability curve isn't slowing. It's accelerating.

| Model | Company | Date | Key Feature |
|---|---|---|---|
| Claude Sonnet 5 | Anthropic | Feb 3 | 94% accuracy on complex workflows |
| GPT-5.3-Codex | OpenAI | Feb 5 | Agentic coding, 25% faster than 5.2 |
| Claude Opus 4.6 | Anthropic | Feb 6 | Agent teams, million-token context |
| GPT-5.3-Codex-Spark | OpenAI | Feb 12 | 1,000+ tokens/sec on Cerebras |
| GLM-5 | Zhipu | Feb 11 | 744B parameters, open-source |
| MiniMax-M2.5 | MiniMax | Feb 12 | 230B parameters, open-source |
| Grok 4.20 | xAI | Feb 17 | 4-agent collaboration, 4.2% hallucination |

Source: NextBigFuture model tracker

What this means in practice: Your "AI advantage" has a half-life of about six weeks. If your moat is model capability, it's not a moat. If your moat is data, workflow integration, and distribution, the model improvements make your product better instead of obsoleting it. This is exactly why the kill test matters: the companies that pass it get stronger with every model release. The ones that don't get weaker.

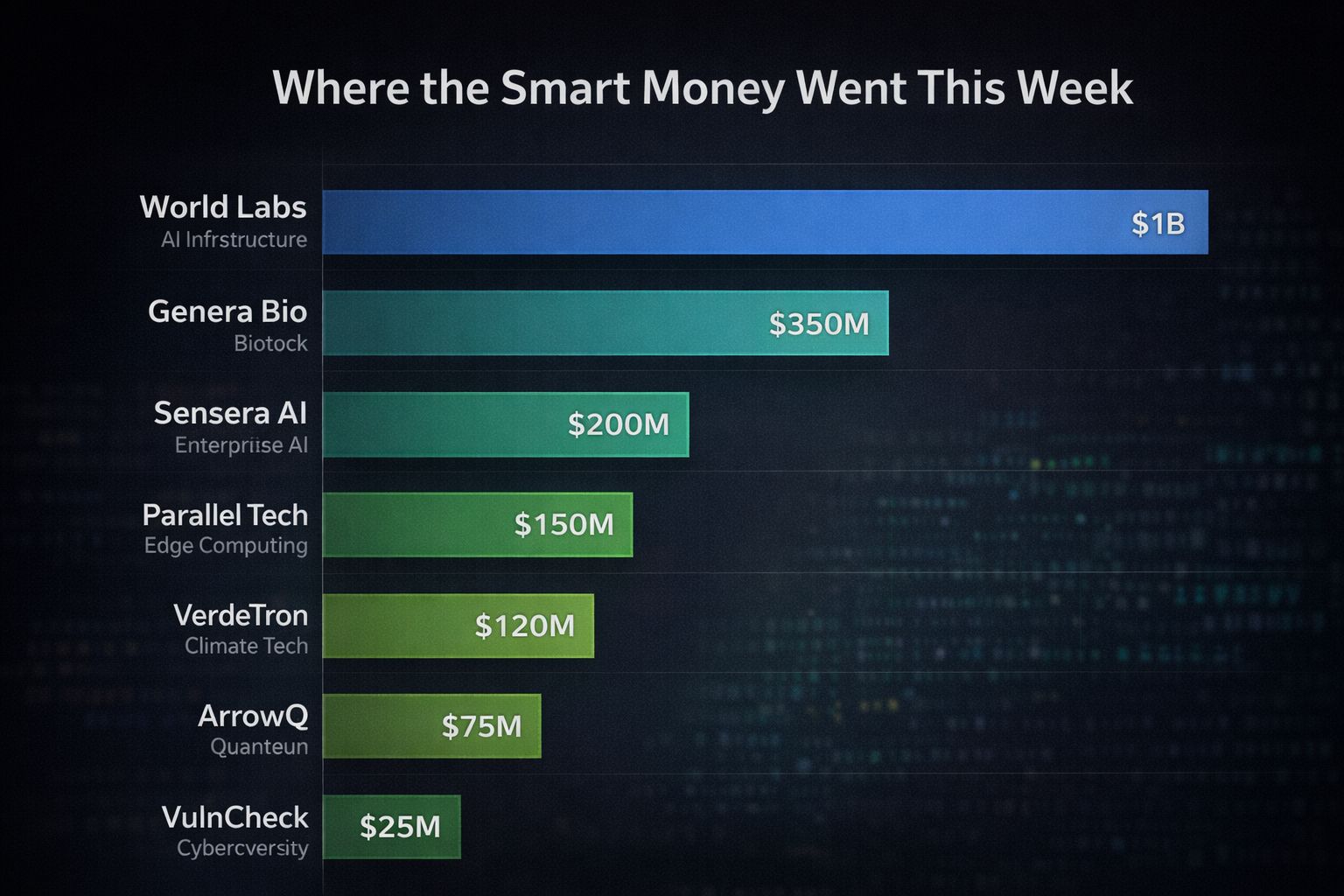

Funding Radar: Week of Feb 17-23

| Company | Amount | Stage | Sector | Lead Investors |
|---|---|---|---|---|
| World Labs | $1B | Growth | Spatial AI | Autodesk ($200M), AMD, Nvidia, a16z |
| Vestwell | $385M | Series E | Fintech / Retirement | - |
| Axiom Space | $350M | Series D | Space Tech | Type One Ventures, QIA |
| Runway | $315M | Series E | AI Video / World Models | General Atlantic, Nvidia, AMD |
| Temporal | $300M | Series D | Dev Infrastructure | a16z, Lightspeed, Sapphire |
| Utility Global | $100M | Series D | Clean Energy / Hydrogen | Ara Partners, APG |
| ChipAgents | $50M | - | AI Chip Design | - |
| Ownwell | $50M | - | AI Property Tax | - |
| VulnCheck | $25M | Series B | Cybersecurity | - |

Source: Crunchbase | TechStartups

✈️ The pattern: Capital is flowing to infrastructure (Temporal, Axiom), physical AI (World Labs, Runway), deep verticals (ChipAgents, VulnCheck, Ownwell), and clean energy (Utility Global). Conspicuously absent: generic SaaS and LLM wrappers. Google's Mowry was right, and the funding data this week proves it in real time.

Lucas Swisher on How Mega Funds Can Still Do 5x Returns & Why Big Markets are the Most Important

Oren Zeev runs Zeev Ventures: $1B+ AUM, $0 in management fees, no associates, no partners, no IC. Portfolio: Audible, Houzz, Navan, Tipalti.

His central insight: "If the company looks weird or wrong, there probably won't be 15 other startups doing it. You will have 2-3 years without real competition and can build a real moat."

Head of Claude Code: What happens after coding is solved | Boris Cherny

Boris Cherny is the creator and head of Claude Code at Anthropic. What began as a simple terminal-based prototype just a year ago has transformed the role of software engineering and is increasingly transforming all professional work.

Claude Opus 4.6 - Agent teams, 1M token context, PowerPoint integration. Available now across all platforms.

Marble (World Labs) - Generate persistent 3D environments from text, images, or video. Free tier available. If you're in gaming, VFX, VR, or architecture, worth testing now.

Temporal - Durable execution for AI agent workflows. If you're deploying autonomous agents that need to survive failures, this is the infrastructure layer. Just raised $300M from a16z.

Framer - YC-backed. Ship production-ready sites in hours. CMS, analytics, AI localization. No dev team needed.

Attio - The CRM for startups and VCs who want clean, modern tooling. Built for how teams actually work now.

Q1 2026 earnings season for software companies. The metric: "Agentic Revenue Transition," not seat growth

SpaceX IPO prep signals as the June/July window approaches

EU AI Act countdown (fully applicable August 2026, 6 months out)

OpenAI $100B+ round finalisation, including Nvidia's $30B stake

SaaS ETF (IGV) looking for a floor after dropping 23% YTD

The Big Number: $7.5 Trillion

That's the total value of the Crunchbase Unicorn Board at close of 2025, up $2 trillion from 2024. Five companies (OpenAI, Scale AI, Anthropic, Project Prometheus, xAI) raised $84 billion alone, representing 20% of all global venture capital in 2025. In the first 8 weeks of 2026, 17 more US AI startups have crossed the $100M threshold. (TechCrunch)

60% of all invested capital went to 629 companies raising $100M+ rounds. More than a third went to just 68 companies raising $500M+. (Crunchbase)

Capital concentration is the defining dynamic of 2026 venture. And it's accelerating.

We sit at the center of 350k+ founders, operators, and LPs. Our goal is to eliminate the "random walk" of fundraising by using our data to make the one warm connection that actually matters.

For Founders: If you are raising a Seed or Series A and your roadmap is built for the agentic era, we want to see it. We don't just invest; we plug you into the distribution loops of our entire community. 👉 Startup Intake Form: Apply here

For Investors: Stop digging through generic deal flow. Tell us exactly what "high-signal" looks like for your mandate, and we'll route the outliers directly to your inbox. 👉 Investor Intake Form: Apply here

Fundraising in 2026 is a game of conviction and speed.

Let's stop wasting time on "maybes" and start building rounds that move the needle.

Thanks for reading this edition. If you got value from it, share it with a founder, teammate, or friend who’d use it too. It keeps this community sharp and growing.


Stay going,

— Kenneth Kelly

Founder @Tech Venture Navigator


Got ideas, topics, or frameworks you’d love to see covered? Just hit reply or drop me a message. We read every note.